A few days ago I mentioned that I had started doing some static file performance runs on a handful of web servers. Here are the results!

Please refer to the previous article on setup details for info on exactly what and how I’m testing this time. Benchmark results apply only to the narrow use case being tested, and this one is no different.

The results are ordered from slowest to fastest peak numbers produced by each server.

9. Monkey (0.9.3-1 debian package)

The monkey server was not able to complete the runs so it is not included in the graphs. At a concurrency of 1, it finished the 30-minute run with an average of only 133 requests/second, far slower than any of the others. With only two concurrent clients it started erroring out on some requests, so I stopped testing it. Looks like this one is not ready for prime time yet.

8. G-WAN (3.3.28)

I had seen some of the performance claims around G-WAN so decided to try it, even though it is not open source. All the tests I’d seen of it had been done running on localhost so I was curious to see how it behaves under a slightly more realistic type of load. Turns out, not too well. Aside from monkey, it was the slowest of the group.

7. apache (event MPM)

I was surprised to see the event MPM do so badly. To be fair, it should do better against a benchmark that has large numbers of mostly idle clients, which is not what this particular benchmark tested. At most points in the test it was also the highest consumer of CPU.

6. lighttpd (1.4.28-2+squeeze1 debian package)

Here we get to the first of the serious players (for this test scenario). lighttpd starts out strong up to 3 concurrent clients. After that it stops scaling up so it loses some ground in the final results. Also, lighttpd is the lightest user of CPU of the group.

5. nginx (0.7.67-3+squeeze2 debian package)

The nginx throughput curve is just about identical to lighttpd, just shifted slightly higher. The CPU consumption curve is also almost identical. These two are twins separated at birth. While nginx uses a tiny bit more CPU than lighttpd, it makes up for it with higher throughput.

4. cherokee (1.0.8-5+squeeze1 debian package)

Cherokee just barely edges out nginx at the higher concurrencies tested so it ends up fourth. To be fair, nginx was faster than cherokee at most of the lower concurrencies though. Note, however, that cherokee uses quite a bit more CPU to deliver its numbers so it is not as efficient as nginx.
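
One rough way to put a number on "efficiency" here is throughput divided by the CPU actually consumed. The sketch below is only an illustration: the function name and the input values are made up for the example and are not taken from the measured runs.

```python
def requests_per_cpu_percent(requests_per_second, cpu_idle_percent):
    """Throughput delivered per percentage point of busy CPU."""
    busy = 100.0 - cpu_idle_percent
    if busy <= 0:
        raise ValueError("CPU reported as fully idle")
    return requests_per_second / busy


# Made-up numbers, NOT benchmark results: the second server is slightly
# faster but burns far more CPU, so it is less efficient by this measure.
lean_server = requests_per_cpu_percent(1500, cpu_idle_percent=70)
hungry_server = requests_per_cpu_percent(1550, cpu_idle_percent=50)
print(lean_server, hungry_server)
```

A higher value means more requests served for the same CPU budget, which is the sense in which a slightly slower server can still be the more efficient one.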

3. apache (worker MPM)

Apache third, really? Yes, but only because this ranking is based on the peak numbers of each server. With worker mpm, apache starts out quite a bit behind lighttpd/nginx/cherokee at lower client concurrencies. However, as those others start to stall as concurrency increases, apache keeps going higher. Around five concurrent clients it catches up to lighttpd and around eight clients it catches up to nginx and cherokee. At ten it scores a throughput just slightly above those two, securing third place in this test. Looking at CPU usage though, at that point it has just about maxed out the CPU (about 1% idle), making it the highest CPU consumer of this group, so it is not very efficient.
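
To make the "ranked by peak" point concrete, here is a minimal sketch of how a peak-based ordering can differ from the ordering at a single concurrency level. The server names and throughput values are placeholders invented for the example, not numbers from these runs.

```python
def rank_by_peak(results):
    """Order servers from slowest to fastest by their best throughput.

    `results` maps server name -> {concurrency: requests_per_second}.
    """
    peaks = {name: max(curve.values()) for name, curve in results.items()}
    return sorted(peaks, key=peaks.get)


def rank_at(results, concurrency):
    """Order servers from slowest to fastest at one concurrency level."""
    return sorted(results, key=lambda name: results[name][concurrency])


# Placeholder data, NOT measured results.
sample = {
    "server_a": {1: 900, 5: 1500, 10: 1600},  # strong start, then flattens
    "server_b": {1: 500, 5: 1400, 10: 1700},  # slow start, keeps scaling
}

print(rank_by_peak(sample))  # ['server_a', 'server_b']
print(rank_at(sample, 1))    # ['server_b', 'server_a']
```

The same data gives opposite orderings depending on which of the two questions is asked, which is why a server that trails at low concurrency can still place higher in a peak-based list.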

2. varnish (2.1.3-8 debian package)

Varnish is not really a web server, of course, so in that sense it is out of place in this test. But it can serve (cached) static files and has been included in other similar performance tests so I decided to include it here.

Varnish throughput starts out quite a bit slower than nginx, right on par with lighttpd and cherokee at lower concurrencies. However, varnish scales up beautifully. Unlike all the previous servers, its throughput curve does not flatten out as concurrency increases in this test; it keeps going higher. Around four concurrent users it surpasses nginx and only keeps going higher all the way to ten.

Varnish was able to push network utilization to 90-94%. The only drawback is that delivering its performance does use up a lot of CPU… only Apache used more CPU than varnish in this test. At ten clients, there is only 9% idle CPU left.

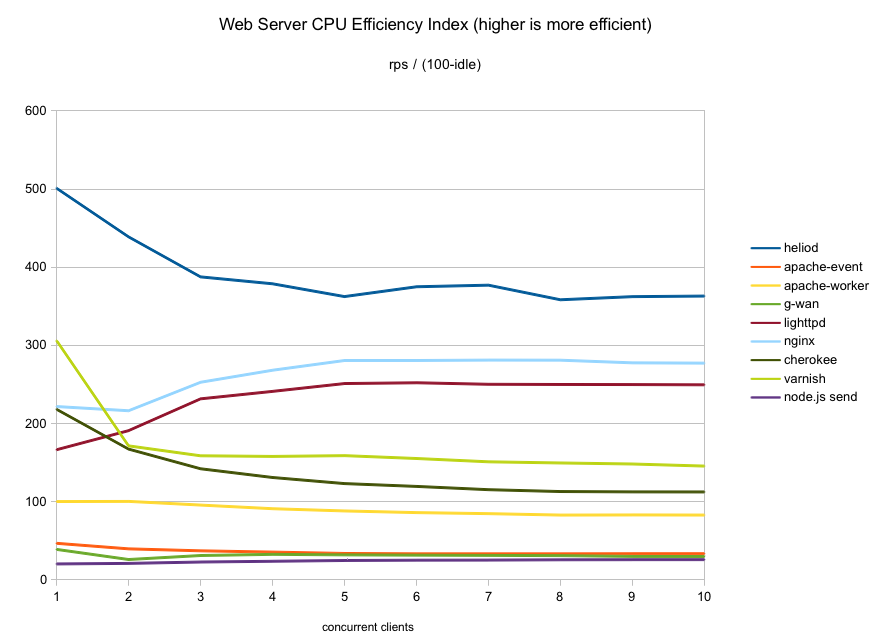

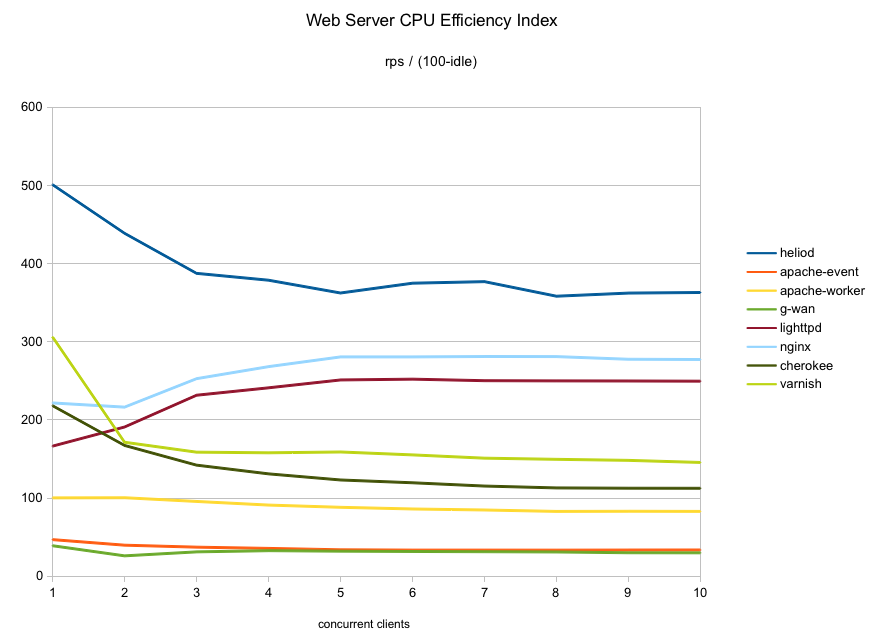

1. heliod

heliod had the highest throughput at every point tested in these runs. It is slightly faster than nginx at sequential requests (one client) and then pulls away.

heliod is also quite efficient in CPU consumption. Up to four concurrent clients it is the lightest user of CPU cycles even though it produced higher throughput than all the others. At higher concurrencies, it used slightly more CPU than nginx/lighttpd although it makes up for it with far higher throughput.

heliod was also the only server able to saturate the gigabit connection (at over 97% utilization). Given that there is 62% idle CPU left at that point, I suspect if I had more bandwidth heliod might be able to score even higher on this machine.

These results should not be much of a surprise… after all heliod is not new, it is the same code that has been setting benchmark records for over ten years (it just wasn’t open source back then). Fast then, still fast today.

If you are running one of these web servers and using varnish to accelerate it, you could switch to heliod by itself and both simplify your setup and gain performance at the same time. Food for thought!

All right, let’s see some graphs.

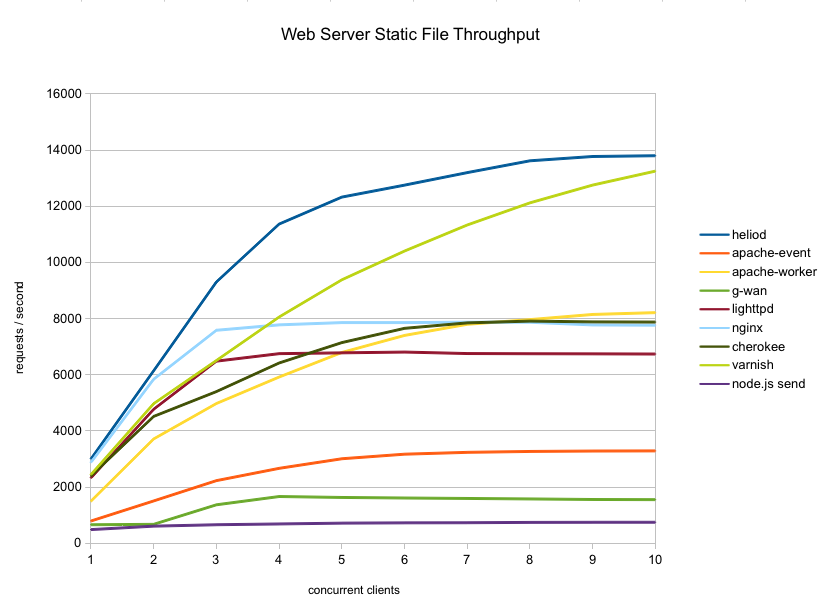

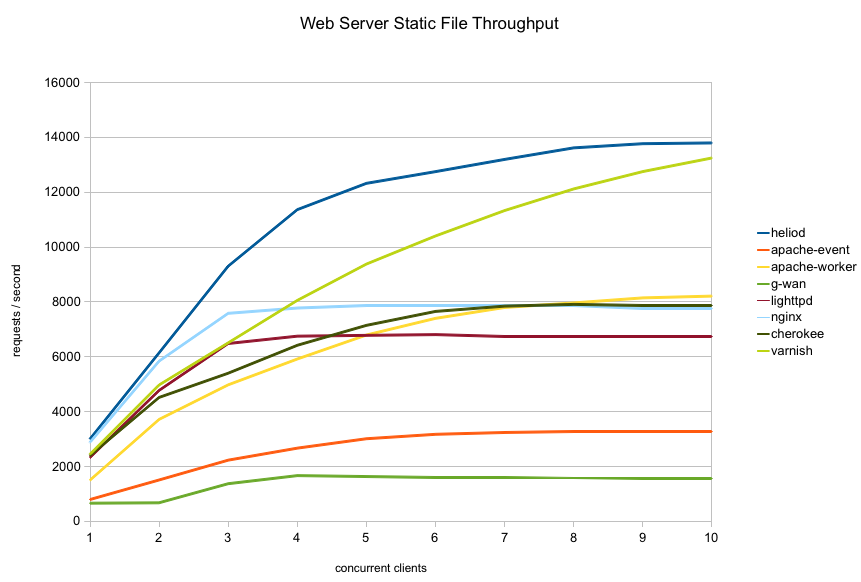

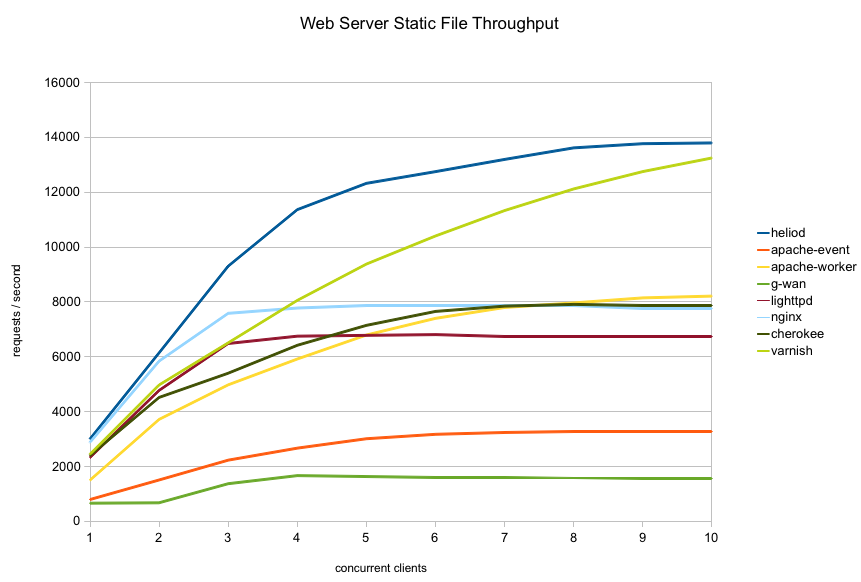

First, here is the overall throughput graph for all the servers tested:

As you can see the servers fall into three groups in terms of throughput:

- apache-event and g-wan are not competitive in this crowd

- apache-worker/nginx/lighttpd/cherokee are quite similar in the middle

- varnish and heliod are in a class of their own at the high end

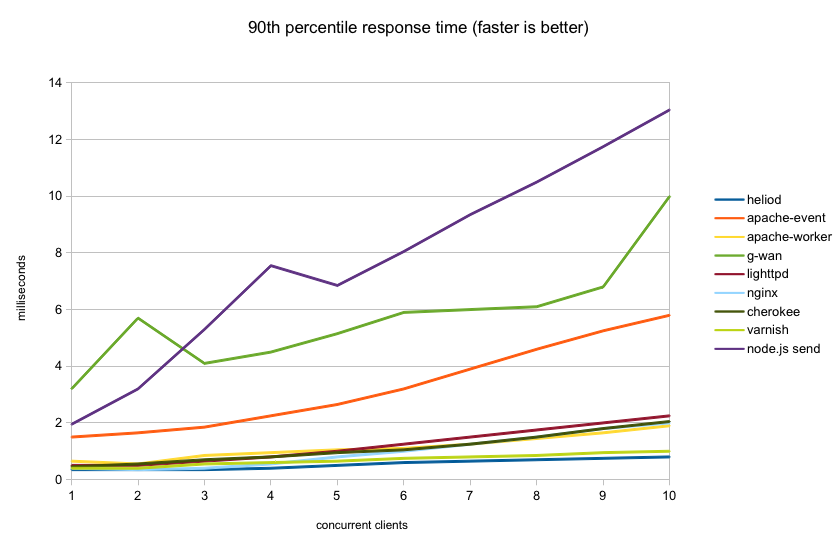

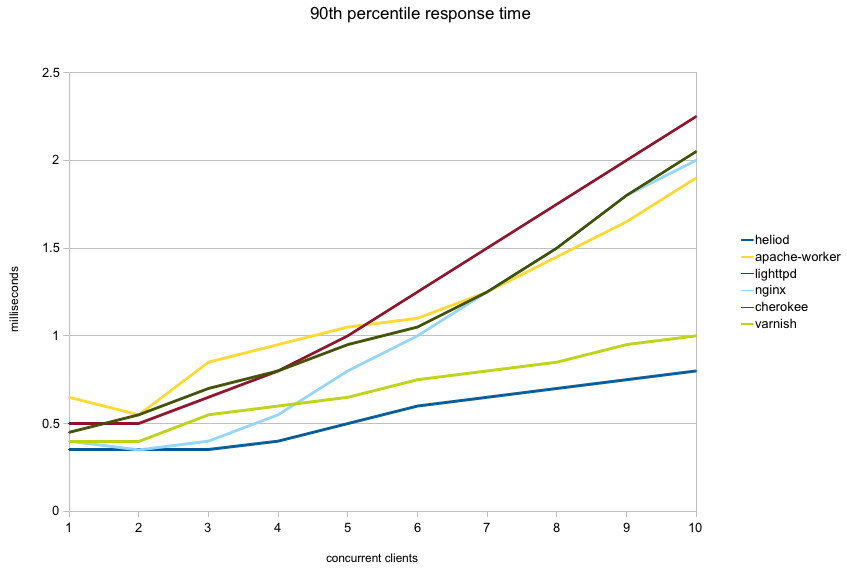

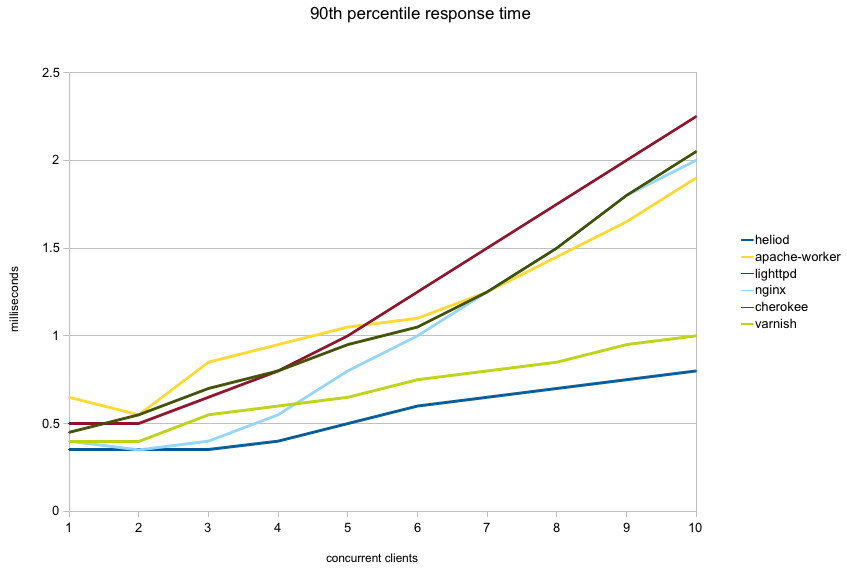

The next graph shows the 90th percentile response time for each server. That is, 90 percent of all requests completed in this time or less. I left out apache-event and g-wan from the graph to avoid compressing the more interesting part of the graph:

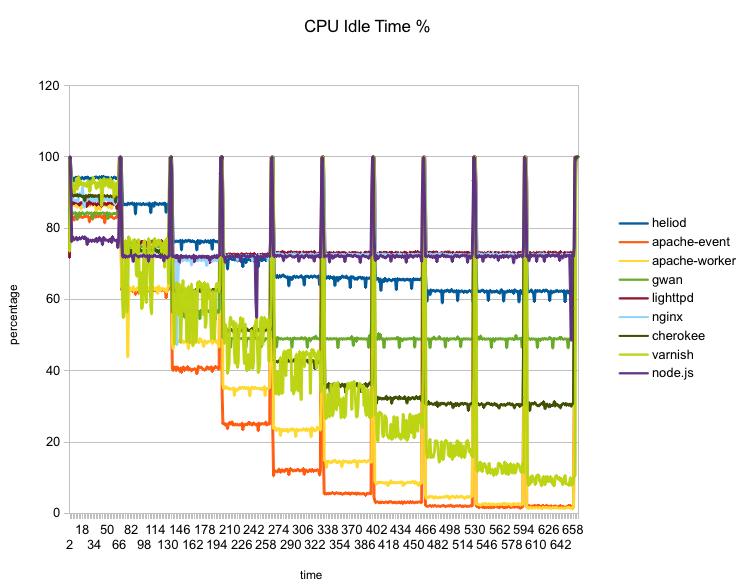

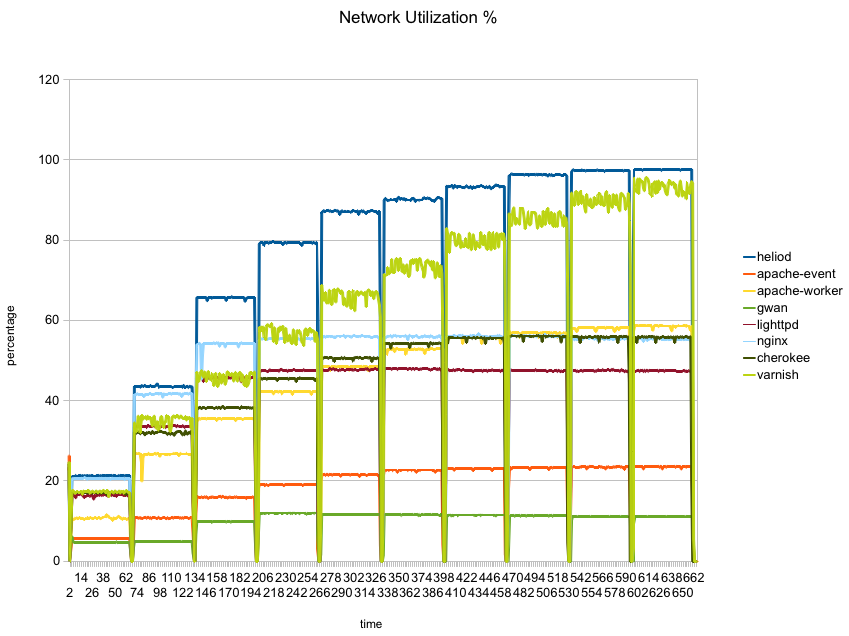
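
For readers unfamiliar with the metric, the 90th percentile can be computed from raw response times with the nearest-rank method, sketched below. This is an assumption for illustration: the exact method faban uses internally may differ, and the sample timings are made up.

```python
import math


def percentile_nearest_rank(samples, pct):
    """Smallest sample such that at least `pct` percent of samples are <= it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


# Made-up response times in milliseconds, with one slow outlier.
sample_ms = [12, 15, 11, 14, 250, 13, 16, 12, 18, 17]

p90 = percentile_nearest_rank(sample_ms, 90)
mean = sum(sample_ms) / len(sample_ms)
print(p90, mean)  # 18 37.8
```

The single 250 ms outlier drags the mean up to 37.8 ms while the 90th percentile stays at 18 ms, which is why a percentile is often a more useful summary of what most requests experienced.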

The next graph shows CPU idle time (percent) for each server through the run. The spikes to 100% between each step are due to the short idle interval between each run as faban starts the next run.

The two apache variants (red and orange) are the only ones that maxed out the CPU. Varnish (light green) also uses quite a bit of CPU and comes close (9% idle). On the other side, lighttpd (dark red) and nginx (light blue) put the least load on the CPU with about 72% idle.

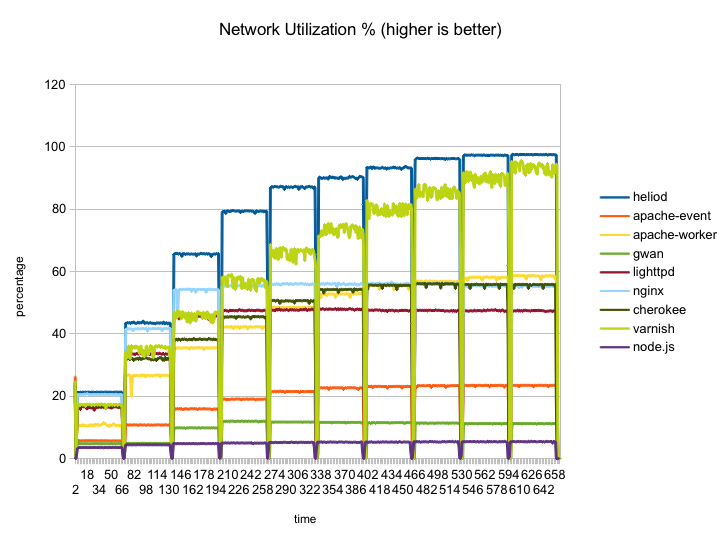

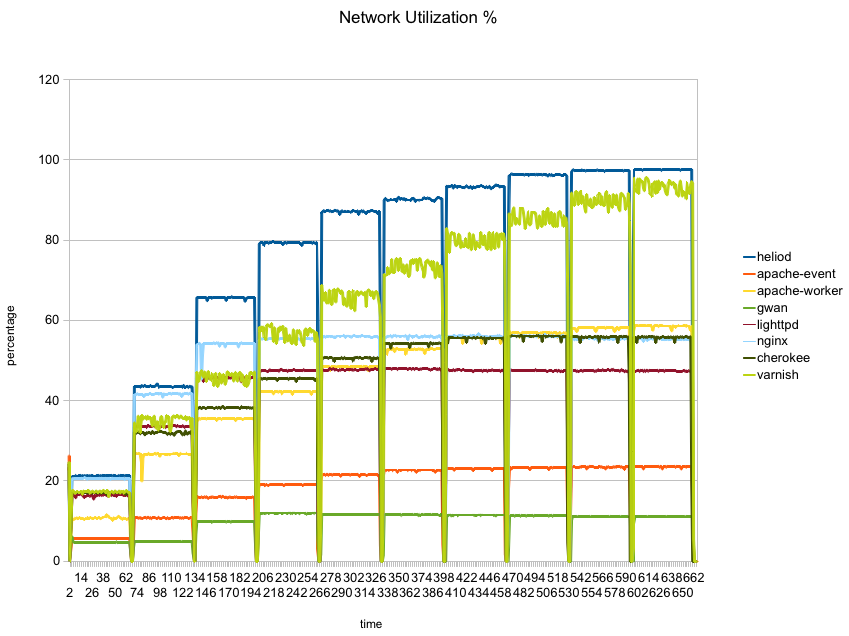

Finally, the next graph shows network utilization percentage of the gigabit interface:

Here heliod (blue) is the only one which manages to saturate the network, with varnish coming in quite close. None of the others manage to reach even 60% utilization.
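
As a rough guide to what these percentages mean, utilization of a link can be derived from a byte rate as shown in this small sketch. The function name is hypothetical and the calculation is a simplification; the graphs above come from the collected nicstat data, which may account for traffic differently.

```python
GIGABIT_BPS = 1_000_000_000  # nominal capacity of a gigabit link, bits/second


def link_utilization_percent(bytes_per_second, link_bits_per_second=GIGABIT_BPS):
    """Percentage of link capacity used by traffic at the given byte rate."""
    return bytes_per_second * 8 / link_bits_per_second * 100


# Illustrative input: about 121 MB/s on the wire corresponds to roughly
# 97% of a gigabit interface.
print(round(link_utilization_percent(121_250_000), 1))
```

Since a gigabit link tops out at 125,000,000 bytes per second, anything in the high 90s leaves essentially no headroom for additional throughput.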

So there you have it… heliod can sustain far higher throughput than any of the popular web servers in this static file test and it can do so efficiently, saturating the network on a low-power two-core machine while leaving plenty of CPU idle. It even manages to sustain higher throughput than varnish, which specializes in caching static content efficiently and is not a full-featured web server.

Of course, all benchmarks are by necessity artificial. If any of the variables change the numbers will change and the rankings may change. These results are representative of the exact use case and setup I tested, not necessarily of any other. Again, for details on what and how I tested, see my previous article.

I hope to test other scenarios in the future. I’d love to also test on a faster CPU with lots of cores, but unfortunately I don’t own such hardware so it is unlikely to happen.

Finally, I set up a github repository fabhttp which contains:

- source code of the faban driver used to run these tests

- dstat/nicstat data collected during the runs (used to generate the graphs above)

- additional graphs generated by faban for every individual run
