I love my ZFS file server… but as always with such things, storage brings an accumulation of duplicates. During a cleaning binge earlier this year I wrote a little tool to identify these duplicates conveniently. For months now I’d been meaning to clean up the code a bit and throw together some documentation so I could publish it. Well, I finally got around to it and dupd is now up on GitHub.

Before writing dupd I tried a few similar tools that I found on a quick search, but they either crashed or were unspeakably slow on my server (which has close to 1 TB of data).

Later I found some better tools like fdupes, but by then I’d mostly completed dupd so I decided to finish it. Always more fun to use one’s own tools!

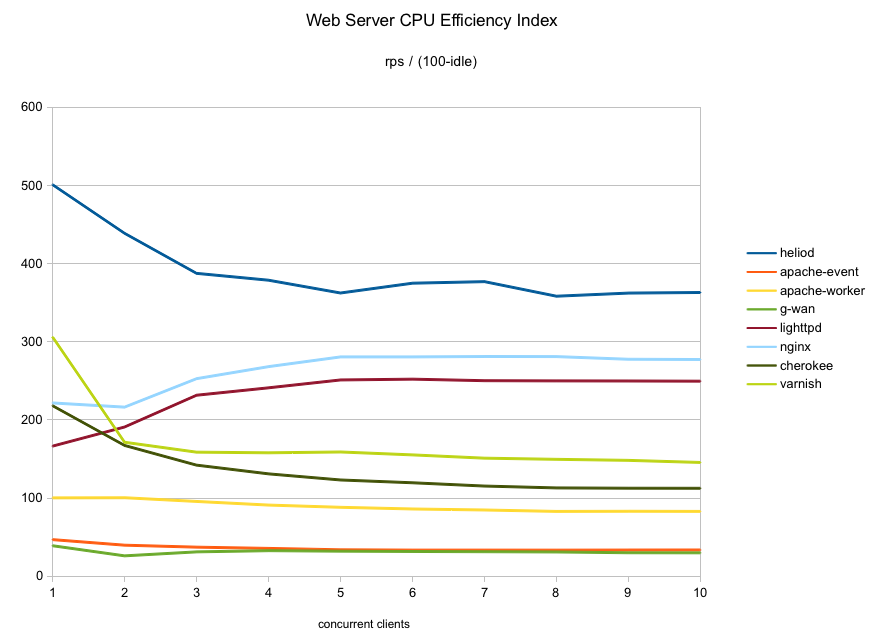

I’m always interested in performance so I can’t resist the opportunity to do some speed comparisons. I also tested fastdup.

Nice to see that dupd is the fastest of the three on these (fairly small) data sets. I did not benchmark my full file server because even with dupd a single run takes nearly six hours.

There is no result for fastdup on the Debian /usr scan because it hangs and never finishes. Unfortunately fastdup is not very robust and looks like it hangs on symlinks, so while it is fast when it works, it is not practical for real use yet.

The times displayed on the graph were computed as follows: I ran the command once to warm up the cache and then ran it ten times in a row. I discarded the two fastest and two slowest runs and averaged the remaining six runs.
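For anyone who wants to repeat the measurement, here is a rough Python sketch of that procedure. It is not the script used for the graph. The names `trimmed_mean` and `benchmark` are made up for illustration, and it assumes wall-clock time is the quantity being measured.

```python
import subprocess
import time


def trimmed_mean(times, trim=2):
    """Average the timings after dropping the `trim` fastest and `trim` slowest."""
    if len(times) <= 2 * trim:
        raise ValueError("not enough runs to trim")
    kept = sorted(times)[trim:len(times) - trim]
    return sum(kept) / len(kept)


def benchmark(cmd, runs=10, trim=2):
    """Time `cmd` (a list of arguments): one warm-up run, then `runs` timed runs."""
    # Warm-up run to populate the cache; its time is not recorded.
    subprocess.run(cmd, stdout=subprocess.DEVNULL, check=True)
    times = []
    for _ in range(runs):
        start = time.perf_counter()
        subprocess.run(cmd, stdout=subprocess.DEVNULL, check=True)
        times.append(time.perf_counter() - start)  # wall-clock seconds (assumption)
    return trimmed_mean(times, trim)
```

With the defaults this keeps six of the ten timed runs, matching the description above. Call `benchmark` with the command to time as a list of arguments, for example the duplicate finder followed by whatever options it needs to scan a directory.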