It has been over three years since I did a performance comparison of a group of duplicate file finder utilities. Having recently released dupd 1.7, I finally took some time to refresh my benchmarks.

The Contenders

This time around I tested the following utilities:

- dupd 1.7

- jdupes 1.10.4 (a new, improved fdupes, actively maintained)

- rdfind 1.3.5 (the runner-up from my previous comparison)

- fdupes 1.6.1 (the old classic, although no longer actively maintained)

- rmlint 2.7.0 (rewritten since my last comparison, now much faster)

- duff 0.5.2 (found it in the Debian packages so why not…)

- fslint 2.42-2 (found it in the Debian packages so why not…)

I had also intended to test the following, but they crashed during the runs, so I didn’t get any numbers: dups, fastdup, identix, py_duplicates.

Based on these and the previous results, I think going forward I’ll only test these: dupd, jdupes, rmlint and rdfind.

If anyone knows of another duplicate finder with solid performance worth comparing, please let me know!

The Files

The file set has a total of 154205 files. Of these, 18677 have a unique size (and so cannot have duplicates) and 1216 were otherwise ignored (zero-sized or not regular files). This leaves 134312 files for further processing. Of these, 44926 are duplicates in 13828 groups (and thus, 89386 unique files).
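As a quick sanity check, the arithmetic behind those counts can be reproduced in a few lines. The variable names are only illustrative; the numbers are the ones quoted above.

```python
# Counts quoted in the paragraph above.
total_files = 154205
unique_size_files = 18677  # no other file shares their size, so no duplicate is possible
ignored_files = 1216       # zero-sized or not regular files

# Files that actually need their contents compared.
candidates = total_files - unique_size_files - ignored_files

duplicates = 44926
duplicate_groups = 13828

# Candidates that turned out not to be duplicates.
unique_files = candidates - duplicates

print(candidates)    # 134312
print(unique_files)  # 89386
```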

The files are all “real” files. That is, they are all taken from my home file server instead of artificially constructed for the benchmark. There is a mix of all types of files such as source code, documents, images, videos and other misc stuff that accumulates on the file server.

In the past I’ve focused on testing on SSD media only, as that’s what I generally use myself. To be more thorough, this time I installed an HDD in the same machine and placed the exact same set of files on both devices.

The Cache

Of course, when a process reads file content it doesn’t necessarily trigger a read from the underlying device, be it SSD or HDD, because the content may already be in the file cache (and often is).

This time I ran each utility/media combination in two ways: once with the file cache cleared before every run, and once with the cache left undisturbed from run to run.

In my experience, the warm cache runs are more representative of real world usage because when I’m working on duplicates I run the tool many times as I clean up files. For the sake of more thorough results, I’ve reported both scenarios.

The Methodology

For each tool/media (SSD and HDD) combination, the runs were done as follows:

- Clear the filesystem cache (echo 3 > /proc/sys/vm/drop_caches).

- Run the scan once, discarding the result.

- Repeat 5 times:

    - For the no-cache runs, clear the cache again.

    - Run and time the tool.

- Report the average of the above five runs as the result.

The command lines and individual run times are included at the bottom of this article.
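The steps above can be sketched roughly as follows. This is not the script I actually used, just a minimal Python illustration of the same procedure. The function names are made up for the example, the 3600-second timeout mirrors the value shown in the raw logs, and writing to /proc/sys/vm/drop_caches requires root on Linux.

```python
import statistics
import subprocess
import time

DROP_CACHES = "/proc/sys/vm/drop_caches"  # Linux only; writing requires root


def clear_file_cache():
    """Equivalent of: echo 3 > /proc/sys/vm/drop_caches"""
    with open(DROP_CACHES, "w") as f:
        f.write("3\n")


def timed_run(command, timeout=3600):
    """Run the command once, discard its output, return wall-clock seconds.

    Assumption: a run exceeding the timeout simply raises
    subprocess.TimeoutExpired; how the real harness handled it is not shown.
    """
    start = time.perf_counter()
    subprocess.run(
        command,
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
        timeout=timeout,
    )
    return time.perf_counter() - start


def benchmark(command, keep_cache, runs=5, clear=clear_file_cache, run=timed_run):
    """Return the average time of `runs` timed scans."""
    clear()       # start from a cold cache
    run(command)  # one untimed scan, result discarded
    times = []
    for _ in range(runs):
        if not keep_cache:
            clear()  # no-cache scenario: cold cache before every timed run
        times.append(run(command))
    return statistics.mean(times)
```

For example, `benchmark(["dupd", "scan", "-q", "-p", "/hdd/files"], keep_cache=True)` would correspond to the dupd "cache kept" HDD scenario. The `clear` and `run` parameters exist only so the loop can be exercised without root or a real scan.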

Results

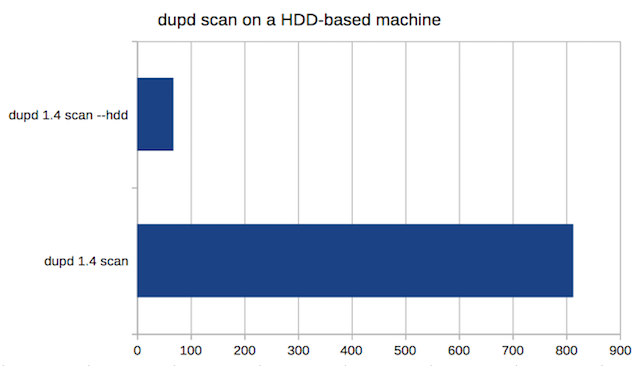

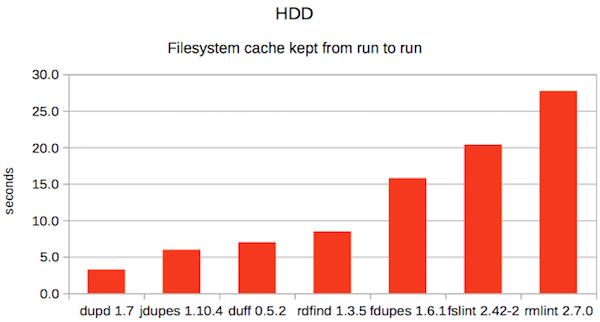

1. HDD with cache

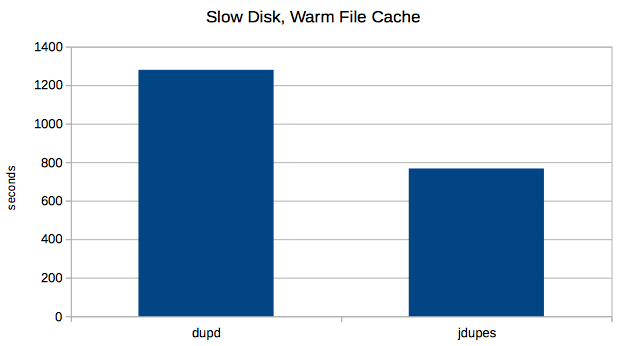

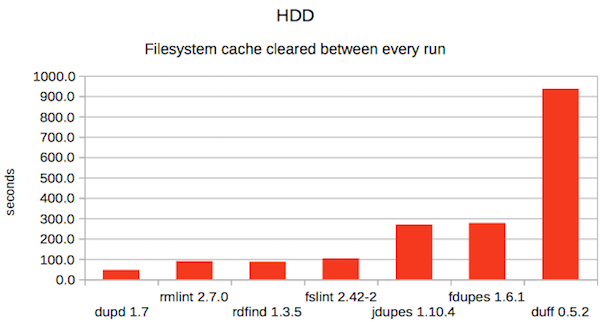

2. HDD without cache

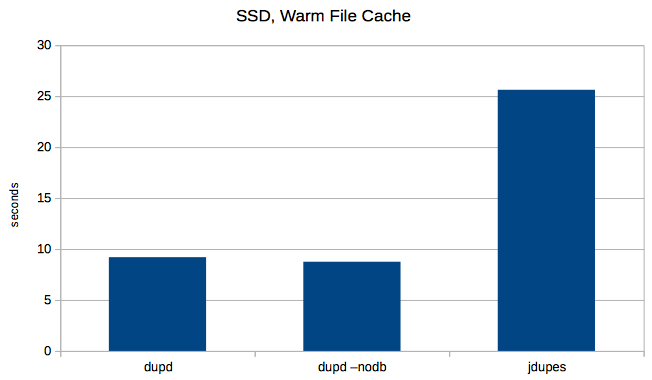

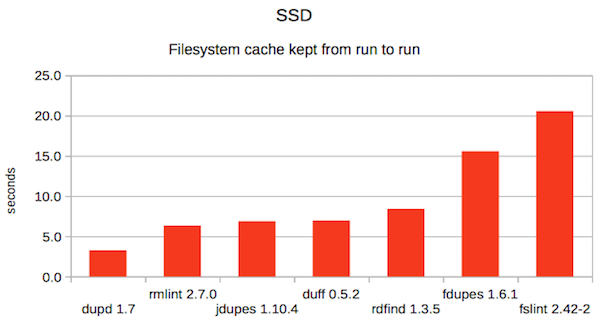

3. SSD with cache

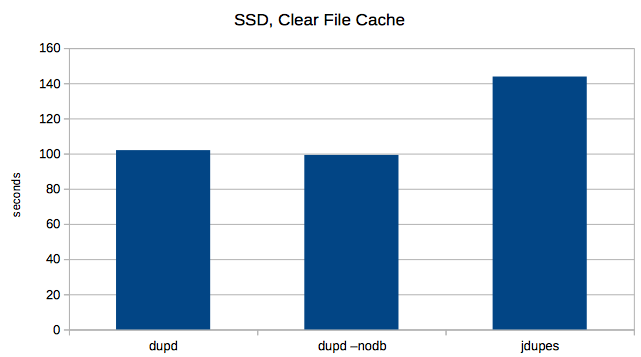

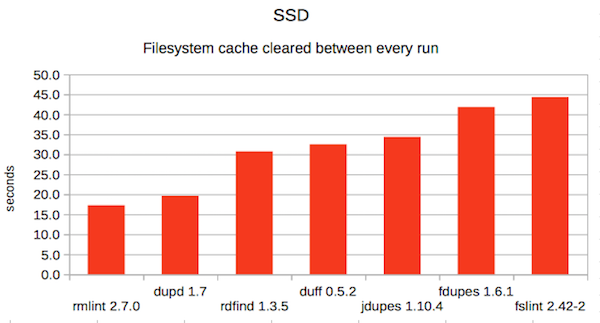

4. SSD without cache

Summary

As you can see above, the ranking varies from scenario to scenario. However, I’m happy to see dupd is the fastest in three of four scenarios and a very close second in the fourth.

To conclude with some kind of ranking, let’s look at the average finishing position of each tool:

| Tool | Average ranking | Finishing positions |
|---|---|---|
| dupd | 1.3 | 1, 1, 1, 2 |
| rmlint | 3.0 | 2, 7, 1, 2 |
| jdupes | 3.8 | 3, 2, 5, 5 |
| rdfind | 3.8 | 5, 4, 3, 3 |
| duff | 4.5 | 4, 3, 4, 7 |
| fdupes | 5.8 | 6, 5, 6, 6 |
| fslint | 6.0 | 7, 6, 7, 4 |
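For anyone who wants to recompute the averages, here is a small sketch that takes the finishing positions exactly as listed in the table and averages them per tool. The dictionary layout is just an illustration.

```python
from statistics import mean

# Finishing positions copied from the table above, one list per tool.
positions = {
    "dupd": [1, 1, 1, 2],
    "rmlint": [2, 7, 1, 2],
    "jdupes": [3, 2, 5, 5],
    "rdfind": [5, 4, 3, 3],
    "duff": [4, 3, 4, 7],
    "fdupes": [6, 5, 6, 6],
    "fslint": [7, 6, 7, 4],
}

# Average finishing position per tool (lower is better).
averages = {tool: mean(places) for tool, places in positions.items()}

# Tools ordered from best to worst average position.
ranked = sorted(averages, key=averages.get)

for tool in ranked:
    print(f"{tool:<8}{averages[tool]:.2f}")
```

The exact averages for dupd, jdupes, rdfind and fdupes are 1.25, 3.75, 3.75 and 5.75; the table shows them rounded up to one decimal place.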

The Raw Data

    -----[ rmlint : CACHE KEPT : /hdd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 92.07
    Running 5 times (timeout=3600): rmlint -o fdupes /hdd/files
    Run 0 took 27.83
    Run 1 took 27.75
    Run 2 took 27.59
    Run 3 took 27.75
    Run 4 took 27.66
    AVERAGE TIME: 27.716

    -----[ jdupes : CACHE KEPT : /hdd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 268.6
    Running 5 times (timeout=3600): jdupes -A -H -r -q /hdd/files
    Run 0 took 5.96
    Run 1 took 5.94
    Run 2 took 5.96
    Run 3 took 5.93
    Run 4 took 5.99
    AVERAGE TIME: 5.956

    -----[ dupd-hdd : CACHE KEPT : /hdd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 46.78
    Running 5 times (timeout=3600): dupd scan -q -p /hdd/files
    Run 0 took 3.24
    Run 1 took 3.29
    Run 2 took 3.21
    Run 3 took 3.21
    Run 4 took 3.25
    AVERAGE TIME: 3.24

    -----[ rdfind : CACHE KEPT : /hdd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 90.25
    Running 5 times (timeout=3600): rdfind -n true /hdd/files
    Run 0 took 8.52
    Run 1 took 8.49
    Run 2 took 8.53
    Run 3 took 8.48
    Run 4 took 8.41
    AVERAGE TIME: 8.486

    -----[ fslint : CACHE KEPT : /hdd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 103.64
    Running 5 times (timeout=3600): findup /hdd/files
    Run 0 took 20.38
    Run 1 took 20.4
    Run 2 took 20.36
    Run 3 took 20.36
    Run 4 took 20.39
    AVERAGE TIME: 20.378

    -----[ fdupes : CACHE KEPT : /hdd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 278.11
    Running 5 times (timeout=3600): fdupes -A -H -r -q /hdd/files
    Run 0 took 15.76
    Run 1 took 15.78
    Run 2 took 15.72
    Run 3 took 15.74
    Run 4 took 15.88
    AVERAGE TIME: 15.776

    -----[ duff : CACHE KEPT : /hdd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 935.51
    Running 5 times (timeout=3600): duff -r -z /hdd/files
    Run 0 took 7.03
    Run 1 took 7.01
    Run 2 took 6.98
    Run 3 took 6.99
    Run 4 took 6.99
    AVERAGE TIME: 7

    -----[ rmlint : CACHE CLEARED EACH RUN : /hdd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 90.59
    Running 5 times (timeout=3600): rmlint -o fdupes /hdd/files
    Run 0 took 89.86
    Run 1 took 89.4
    Run 2 took 90.44
    Run 3 took 89.87
    Run 4 took 90.84
    AVERAGE TIME: 90.082

    -----[ jdupes : CACHE CLEARED EACH RUN : /hdd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 269.69
    Running 5 times (timeout=3600): jdupes -A -H -r -q /hdd/files
    Run 0 took 268.97
    Run 1 took 270.07
    Run 2 took 268.52
    Run 3 took 268.95
    Run 4 took 269
    AVERAGE TIME: 269.102

    -----[ dupd-hdd : CACHE CLEARED EACH RUN : /hdd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 46.26
    Running 5 times (timeout=3600): dupd scan -q -p /hdd/files
    Run 0 took 46.37
    Run 1 took 46.43
    Run 2 took 46.24
    Run 3 took 46.68
    Run 4 took 46.62
    AVERAGE TIME: 46.468

    -----[ rdfind : CACHE CLEARED EACH RUN : /hdd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 86.59
    Running 5 times (timeout=3600): rdfind -n true /hdd/files
    Run 0 took 86.48
    Run 1 took 87.02
    Run 2 took 86.55
    Run 3 took 86.57
    Run 4 took 86.75
    AVERAGE TIME: 86.674

    -----[ fslint : CACHE CLEARED EACH RUN : /hdd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 103.29
    Running 5 times (timeout=3600): findup /hdd/files
    Run 0 took 103.49
    Run 1 took 103.64
    Run 2 took 102.97
    Run 3 took 103.16
    Run 4 took 103.28
    AVERAGE TIME: 103.308

    -----[ fdupes : CACHE CLEARED EACH RUN : /hdd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 276.02
    Running 5 times (timeout=3600): fdupes -A -H -r -q /hdd/files
    Run 0 took 276.88
    Run 1 took 276.18
    Run 2 took 276.83
    Run 3 took 277.87
    Run 4 took 276.99
    AVERAGE TIME: 276.95

    -----[ duff : CACHE CLEARED EACH RUN : /hdd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 935.56
    Running 5 times (timeout=3600): duff -r -z /hdd/files
    Run 0 took 936.06
    Run 1 took 936.87
    Run 2 took 936.58
    Run 3 took 937.01
    Run 4 took 935.95
    AVERAGE TIME: 936.494

    -----[ rmlint : CACHE KEPT : /ssd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 18.62
    Running 5 times (timeout=3600): rmlint -o fdupes /ssd/files
    Run 0 took 6.38
    Run 1 took 6.33
    Run 2 took 6.3
    Run 3 took 6.32
    Run 4 took 6.32
    AVERAGE TIME: 6.33

    -----[ jdupes : CACHE KEPT : /ssd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 35.12
    Running 5 times (timeout=3600): jdupes -A -H -r -q /ssd/files
    Run 0 took 6.89
    Run 1 took 6.84
    Run 2 took 6.88
    Run 3 took 6.83
    Run 4 took 6.91
    AVERAGE TIME: 6.87

    -----[ dupd-hdd : CACHE KEPT : /ssd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 19.85
    Running 5 times (timeout=3600): dupd scan -q -p /ssd/files
    Run 0 took 3.34
    Run 1 took 3.17
    Run 2 took 3.25
    Run 3 took 3.3
    Run 4 took 3.29
    AVERAGE TIME: 3.27

    -----[ rdfind : CACHE KEPT : /ssd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 31.43
    Running 5 times (timeout=3600): rdfind -n true /ssd/files
    Run 0 took 8.5
    Run 1 took 8.38
    Run 2 took 8.42
    Run 3 took 8.39
    Run 4 took 8.38
    AVERAGE TIME: 8.414

    -----[ fslint : CACHE KEPT : /ssd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 44.67
    Running 5 times (timeout=3600): findup /ssd/files
    Run 0 took 20.63
    Run 1 took 20.58
    Run 2 took 20.54
    Run 3 took 20.54
    Run 4 took 20.53
    AVERAGE TIME: 20.564

    -----[ fdupes : CACHE KEPT : /ssd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 42.13
    Running 5 times (timeout=3600): fdupes -A -H -r -q /ssd/files
    Run 0 took 15.68
    Run 1 took 15.52
    Run 2 took 15.53
    Run 3 took 15.56
    Run 4 took 15.54
    AVERAGE TIME: 15.566

    -----[ duff : CACHE KEPT : /ssd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 32.54
    Running 5 times (timeout=3600): duff -r -z /ssd/files
    Run 0 took 7
    Run 1 took 6.96
    Run 2 took 6.98
    Run 3 took 6.95
    Run 4 took 6.95
    AVERAGE TIME: 6.968

    -----[ rmlint : CACHE CLEARED EACH RUN : /ssd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 17.39
    Running 5 times (timeout=3600): rmlint -o fdupes /ssd/files
    Run 0 took 17.29
    Run 1 took 17.21
    Run 2 took 17.25
    Run 3 took 17.24
    Run 4 took 17.31
    AVERAGE TIME: 17.26

    -----[ jdupes : CACHE CLEARED EACH RUN : /ssd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 34.36
    Running 5 times (timeout=3600): jdupes -A -H -r -q /ssd/files
    Run 0 took 34.3
    Run 1 took 34.35
    Run 2 took 34.48
    Run 3 took 34.34
    Run 4 took 34.36
    AVERAGE TIME: 34.366

    -----[ dupd-hdd : CACHE CLEARED EACH RUN : /ssd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 19.7
    Running 5 times (timeout=3600): dupd scan -q -p /ssd/files
    Run 0 took 19.67
    Run 1 took 19.65
    Run 2 took 19.66
    Run 3 took 19.65
    Run 4 took 19.51
    AVERAGE TIME: 19.628

    -----[ rdfind : CACHE CLEARED EACH RUN : /ssd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 30.93
    Running 5 times (timeout=3600): rdfind -n true /ssd/files
    Run 0 took 30.7
    Run 1 took 30.61
    Run 2 took 30.72
    Run 3 took 30.8
    Run 4 took 30.79
    AVERAGE TIME: 30.724

    -----[ fslint : CACHE CLEARED EACH RUN : /ssd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 44.41
    Running 5 times (timeout=3600): findup /ssd/files
    Run 0 took 44.23
    Run 1 took 44.3
    Run 2 took 44.44
    Run 3 took 44.24
    Run 4 took 44.41
    AVERAGE TIME: 44.324

    -----[ fdupes : CACHE CLEARED EACH RUN : /ssd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 42.05
    Running 5 times (timeout=3600): fdupes -A -H -r -q /ssd/files
    Run 0 took 41.79
    Run 1 took 41.79
    Run 2 took 41.79
    Run 3 took 41.8
    Run 4 took 41.92
    AVERAGE TIME: 41.818

    -----[ duff : CACHE CLEARED EACH RUN : /ssd/files]------
    Running one untimed scan first...
    Result/time from untimed run: 32.45
    Running 5 times (timeout=3600): duff -r -z /ssd/files
    Run 0 took 32.49
    Run 1 took 32.48
    Run 2 took 32.52
    Run 3 took 32.49
    Run 4 took 32.48
    AVERAGE TIME: 32.492
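If you want to slice the log yourself, the following is a rough sketch of one way to pull the per-scenario averages out of it. The regular expressions are my guess at a parser for the header and "AVERAGE TIME" lines shown above, not part of the benchmark script. The `sample` string holds three header/average pairs copied from the log with the individual run lines left out.

```python
import re
from collections import defaultdict

# Matches headers such as: -----[ jdupes : CACHE KEPT : /hdd/files]------
HEADER = re.compile(
    r"-----\[ (\S+) : (CACHE KEPT|CACHE CLEARED EACH RUN) : (\S+)\]------"
)
AVERAGE = re.compile(r"AVERAGE TIME: ([\d.]+)")


def parse_averages(log_text):
    """Map (path, cache mode) to {tool label: average seconds}."""
    results = defaultdict(dict)
    # Splitting on a pattern with three groups yields:
    # [prefix, tool, mode, path, body, tool, mode, path, body, ...]
    parts = HEADER.split(log_text)
    for i in range(1, len(parts), 4):
        tool, mode, path, body = parts[i:i + 4]
        match = AVERAGE.search(body)
        if match:
            results[(path, mode)][tool] = float(match.group(1))
    return dict(results)


def rank(averages):
    """Tool labels ordered from fastest to slowest."""
    return sorted(averages, key=averages.get)


# Excerpt of the log above (run lines omitted for brevity).
sample = (
    "-----[ jdupes : CACHE KEPT : /hdd/files]------ AVERAGE TIME: 5.956 "
    "-----[ dupd-hdd : CACHE KEPT : /hdd/files]------ AVERAGE TIME: 3.24 "
    "-----[ rdfind : CACHE KEPT : /hdd/files]------ AVERAGE TIME: 8.486"
)

by_scenario = parse_averages(sample)
print(rank(by_scenario[("/hdd/files", "CACHE KEPT")]))
```

The parser does not care whether each log entry sits on its own line or is run together, since it only looks for the header and average markers.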